ARTIS zoo residency Driessens / Verstappen

Posted May 24, 2022

Machines spotting birds

Birds hold a particular fascination for us humans. Spotting them is a favoured activity for many. Perhaps it is their elusiveness as flying beings or temporary visitors that makes us cherish the moments of close encounter. In 2018 Maria and Erwin were inspired to extend this human activity to the nascent world of machine learning with their Spotter project. The first iteration of the Spotter was introduced to Amstelpark in 2018 during our Machine Wilderness residencies at Zone2Source. These formed a prelude to the more extensive residency programme at ARTIS Amsterdam Royal Zoo, which eventually started in 2022 after a delay caused by the coronavirus pandemic.

Artificial animal portraits

When Erwin and Maria speak of the Spotter, they clearly place it in a long tradition of nature observation and depiction by artists in the zoo. But it operates differently, Maria explains, because it isn’t looking for an idealised pose. Humans tend to depict animals in attractive poses, where all the parts of the body are clearly visible, but the machine just takes pictures in any pose, even when the animal has turned its back to us, as the Mandrills often do. We had a full day of asses there, Maria laughs. At night the Spotter trains on the images it has collected, to generate its own interpretation of what that particular animal looks like. Having a brain of limited size forces the machine to improvise. So the focus is less on perfect identification, as is usually the case with image classifying software, and more on generative potential. The research will result in short films that take us from the earliest renderings, when the machine knows little about what it is seeing, to the later stages, when its visualisations become increasingly detailed and also recognisable to us humans.

If the imaginative power of the Spotter gains from limited brainpower, one wonders what that means for the worldview of organisms like insects, which also have to cope with a limited number of synapses. Does that give them a huge imagination?

Efficiency is in the eye of the beholder

When Maria and Erwin are setting up by the giraffes, the Spotter is immediately drawn to a pigeon. The Spotter identifies a selection of animals set by Erwin and Maria. So they ‘switch off’ birds, as they have ‘switched off’ other animals and in fact humans, so that the attention of the Spotter is only grabbed by the main species of interest. It is the great advantage of working in the zoo, Erwin explains, that we can expose the Spotter to such a wide variety of species, and the design of the enclosures makes them highly visible. In the wild it would be much harder to get footage this quickly. But there are still many challenges. When a giraffe passes behind a large tree trunk, the Spotter sometimes identifies the head sticking out on the other side of the trunk as a separate giraffe. It thinks there are two. Ideally the machine would understand the body structure of animals and be able to tell what is a leg, a tail or a head. But making the machine ever more effective is also somewhat unsettling. It starts to feel like surveillance with military precision.
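As a rough illustration of what ‘switching off’ species could look like in software, here is a minimal sketch. It assumes a detector that reports a label and a confidence score for each sighting; the `Detection` class, the `ENABLED_SPECIES` set and the threshold are hypothetical names and values, not taken from the Spotter itself.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str         # species name reported by a (hypothetical) detector
    confidence: float  # detector score between 0 and 1


# Species the machine should react to at this enclosure (assumed list);
# everything else, including birds and humans, is 'switched off'.
ENABLED_SPECIES = {"giraffe", "zebra"}


def filter_detections(detections, enabled=ENABLED_SPECIES, min_confidence=0.5):
    """Keep only detections of enabled species above a confidence threshold."""
    return [
        d for d in detections
        if d.label in enabled and d.confidence >= min_confidence
    ]


# One imagined camera frame: a pigeon, a visitor and two giraffe candidates.
frame = [
    Detection("bird", 0.9),
    Detection("giraffe", 0.8),
    Detection("person", 0.95),
    Detection("giraffe", 0.3),
]
kept = filter_detections(frame)
```

In this sketch the pigeon and the visitor are ignored however confident the detector is, and the weak second giraffe candidate is dropped by the threshold.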

How to teach a machine to spot an animal

In reality the Spotter has taken many humble steps to get to its current level of performance. In the early days, during the preparatory session at Zone2Source in Amstelpark in 2018, Erwin was first just trying to get the machine to distinguish any moving object from a background, by swinging a roll of tape in front of the camera. A machine doesn’t know object from background. It can’t distinguish between an animal moving and the shadows of a tree moving in the wind, and it doesn’t understand that when an animal turns around it is still the same thing. All those steps have to be painstakingly built. They are things the human eye takes for granted. It was particularly striking to hear that for the machine it wasn’t at all obvious that when you zoom in on an animal it is still the same scene, because so much changes within the image.
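To give a feeling for that very first step, here is a minimal sketch of frame differencing, one common and naive way to separate something moving from a static background. It is not the Spotter's actual code; the tiny greyscale 'frames' and the threshold are made up for illustration.

```python
def moving_pixels(previous, current, threshold=30):
    """Mark pixels whose brightness changed by more than the threshold."""
    return [
        [abs(c - p) > threshold for p, c in zip(prev_row, cur_row)]
        for prev_row, cur_row in zip(previous, current)
    ]


def bounding_box(mask):
    """Smallest rectangle (left, top, right, bottom) around changed pixels."""
    coords = [
        (x, y)
        for y, row in enumerate(mask)
        for x, changed in enumerate(row)
        if changed
    ]
    if not coords:
        return None  # nothing moved between the two frames
    xs = [x for x, _ in coords]
    ys = [y for _, y in coords]
    return (min(xs), min(ys), max(xs), max(ys))


# A dull 6x4 background, and a second frame in which two pixels light up,
# as if a roll of tape swung through the picture.
background = [[10] * 6 for _ in range(4)]
frame = [row[:] for row in background]
frame[1][2] = 200
frame[2][3] = 200

box = bounding_box(moving_pixels(background, frame))
```

A sketch like this reacts to any change in brightness, so a shadow swaying in the wind would trigger it just as readily as an animal, which is exactly the kind of problem described above.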

At the zoo a main focus was to make the machine persistent. When it ‘loses’ an animal it doesn’t immediately start a new search, but stays patient: something was here recently, so let's keep looking. That way it has more time to find the animal again. And we see it happening at the Alpine ibex. It finds an ibex lying on the rocky surface in the distance. A rectangle appears briefly around the body of the animal and disappears again. The camera waits and yes, it is seeing the animal again, the rectangle returns. It continues to zoom in until it can take a good-sized photo.
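The patience described here can be sketched as a small state machine. This is only an illustration under assumptions: the class name, the three states and the `patience` value are hypothetical, and the real Spotter may handle this quite differently.

```python
class PatientTracker:
    """Keeps waiting for a while after losing the animal before searching anew."""

    def __init__(self, patience=5):
        self.patience = patience  # frames to keep waiting (assumed value)
        self.missed = 0           # consecutive frames without a detection
        self.state = "searching"

    def update(self, animal_visible):
        """Feed one frame's result and return the resulting state."""
        if animal_visible:
            # Found it (again): reset the counter and keep tracking.
            self.missed = 0
            self.state = "tracking"
        elif self.state in ("tracking", "waiting"):
            # Something was here recently, so keep looking for a while.
            self.missed += 1
            if self.missed <= self.patience:
                self.state = "waiting"
            else:
                self.state = "searching"
        return self.state


tracker = PatientTracker(patience=2)
# The rectangle appears, disappears, returns, and then the animal is gone.
observations = [True, False, True, False, False, False]
states = [tracker.update(seen) for seen in observations]
```

With a patience of two frames, a single missed frame leaves the tracker waiting at the same spot, and only a third consecutive miss sends it back to a fresh search.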

Background flavours

A main benefit of having a residency of a few weeks at the zoo is spending entire days at specific locations, where a typical visitor only spends a short time with a species. This deepens your appreciation for the animals, and there are surprises. The Meerkats seem able to spot birds and even airplanes flying at very high altitude. Small birds collect the fur that the Alpine ibex are shedding.

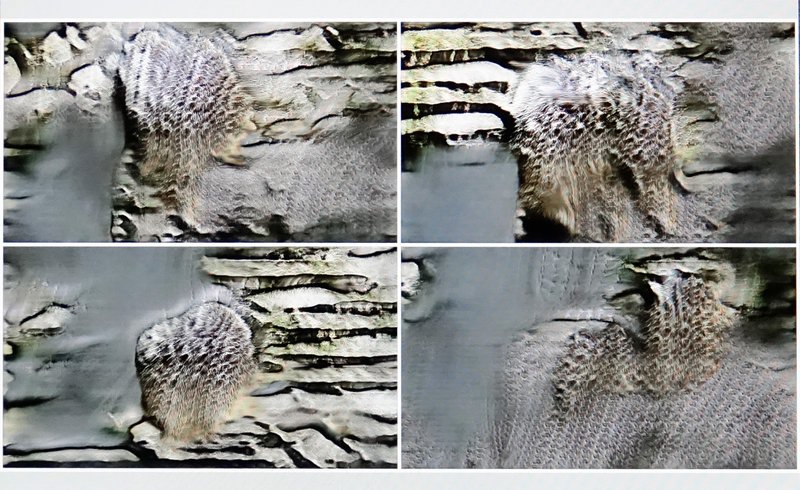

Perhaps a disadvantage compared to working in a wild habitat is that the background behind the animal is quite homogeneous in a zoo. When you see images of Alpine ibex online, you find them with amazingly rich backdrops of mountain peaks, streams, rock formations or fields full of herbs. The range of contexts is more limited in a zoo. When the Spotter sees a Meerkat, it sees either sand or a particular rock formation behind it. So the animal becomes rather ingrained with the background. Working in a full landscape might challenge the imagination of the Spotter more.

Drawing a blank

In preparation for the residency, the work by Maria and Erwin on the Spotter hasn’t generated one specific neural network, but rather a massive family of related networks. For different locations and conditions they apply different networks. Many, however, collapse at some point: the network no longer renders anything that is even remotely like the animal in question. Its imagination suddenly gives out and it only draws vague monochromes, like an abstract painter. As if the network has gone ‘fully Barnett Newman’ on us. Normally the network doesn’t really recover from that, so it becomes redundant. Even some artificial artists can suffer from overexposure, it seems.

1dmm+2gmm_k5_0.0002+0.0002_19.2+18.0M_lsgan_b4

Is the name of one of the favourite networks Maria and Erwin have built. Favoured because of the impressionistic images it generates. When we are standing by the zebras and giraffes, Maria and Erwin are already excited by the prospect of letting 1dmm+2gmm loose on the material. It is particularly good at combining a rich diversity of features, because it seems to take them from a wider range of sources. And the more favoured networks also generate interesting stuff in a relatively short time. 1dmm+2gmm only needs one day's worth of material to generate interesting-looking images. It’s not a very memorable name, but Erwin breaks it down into parts:

- 1dmm+2gmm refers to: 1 'mm' discriminator step + 2 'mm' generator steps,

- 'mm' stands for 'makemix', which is one of a family of network architectures,

- k5 refers to: the size of its filters,

- 0.0002+0.0002 refers to: the learning rates of the generator and discriminator networks,

- 19.2+18.0 refers to: the number of parameters per network, in millions,

- b4 refers to batch size: if it learns from only 1 image it tends to be erratic, b4 indicates that it learns from four images simultaneously, which gives it a more steady direction.
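Erwin's breakdown can be turned into a small parser. This is a minimal sketch that assumes the parts are always separated by underscores and always appear in the order shown in this one name. The function name and dictionary keys are our own. The `lsgan` part is not explained in the breakdown above; it presumably refers to a least-squares GAN training loss, so the sketch simply stores it as an unexplained tag.

```python
import re


def parse_network_name(name):
    """Split a network name into the settings described in the list above."""
    steps, kernel, rates, params, loss_tag, batch = name.split("_")

    parsed_steps = {}
    for step in steps.split("+"):
        # e.g. "1dmm" -> 1 step, 'd' for discriminator, architecture "mm"
        count, role, arch = re.fullmatch(r"(\d+)([dg])(\w+)", step).groups()
        network = "discriminator" if role == "d" else "generator"
        parsed_steps[network] = {"steps": int(count), "architecture": arch}

    return {
        "steps": parsed_steps,
        "kernel_size": int(kernel[1:]),  # "k5" -> 5
        "learning_rates": tuple(float(r) for r in rates.split("+")),
        "parameters_millions": tuple(
            float(p) for p in params.rstrip("M").split("+")
        ),
        "loss_tag": loss_tag,            # not explained in the text (assumption)
        "batch_size": int(batch[1:]),    # "b4" -> 4
    }


config = parse_network_name("1dmm+2gmm_k5_0.0002+0.0002_19.2+18.0M_lsgan_b4")
```

The learning rates and parameter counts are kept as plain pairs, because the name itself does not say which value belongs to which network.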

Residency reflections

Observing animals for multiple days has changed my view of the zoo, Maria says. It is like visiting another world and every day is different. It is like a strange dream to have all the animals here. You see how animals react to changing conditions. You start to see subtle indications of hierarchy among the animals that share an enclosure. You slowly get to know some of the caretakers. It is a commercially run park of course, but you see how care for the animals really is the first priority here. We really found out how nice it is to spend long periods with specific animals. With that experience, I will be a different zoo visitor forever.

Created: 24 May 2022 / Updated: 24 May 2022